There’s a moment every PC builder hits: staring at two CPUs with nearly identical prices, one running at 4.8 GHz and the other at 3.6 GHz, and assuming the answer is obvious. It isn’t. That assumption has led to more wasted money and worse performance than almost any other hardware mistake.

This guide covers everything you actually need to know about CPUs: how they work at the instruction level, what specs mean in practice, why some numbers matter and others mislead, and how to diagnose when a CPU is genuinely limiting your system.

The Job a CPU Actually Does (And Why It’s Harder Than It Sounds)

A CPU (Central Processing Unit) is the component in your computer responsible for executing instructions. Every calculation, every logical decision, every piece of software behavior traces back to the CPU processing a sequence of binary instructions.

But “executing instructions” undersells the complexity. A modern CPU isn’t just following a list sequentially. It’s predicting what instructions will come next, fetching data before it’s requested, executing multiple operations simultaneously, and managing memory hierarchies, all within nanoseconds.

The von Neumann architecture, which underlies virtually every modern CPU, defines a processor as a system that fetches instructions from memory, decodes them, executes them, and stores results. That four-step loop (fetch, decode, execute, writeback) happens billions of times per second.
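To make the loop concrete, here is a minimal sketch of a toy processor in Python. The `ToyCPU` class, its four registers, and the `LOAD`/`ADD`/`HALT` opcodes are all invented for illustration; no real instruction set looks like this, and a real CPU overlaps these steps rather than running them one at a time.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCPU:
    """A deliberately tiny machine: four registers and a program counter."""
    registers: list = field(default_factory=lambda: [0, 0, 0, 0])
    pc: int = 0  # program counter: index of the next instruction

    def run(self, program):
        while self.pc < len(program):
            instruction = program[self.pc]   # fetch
            self.pc += 1
            opcode, *operands = instruction  # decode
            if opcode == "LOAD":             # execute, then write back
                dest, value = operands
                self.registers[dest] = value
            elif opcode == "ADD":
                dest, a, b = operands
                self.registers[dest] = self.registers[a] + self.registers[b]
            elif opcode == "HALT":
                break
            else:
                raise ValueError(f"unknown opcode: {opcode}")
        return self.registers


program = [
    ("LOAD", 0, 2),    # r0 = 2
    ("LOAD", 1, 3),    # r1 = 3
    ("ADD", 2, 0, 1),  # r2 = r0 + r1
    ("HALT",),
]
print(ToyCPU().run(program))  # [2, 3, 5, 0]
```

Each pass through the `while` loop is one trip around fetch, decode, execute, writeback. Everything a faster CPU does differently is about getting more of these trips done per unit of time.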

What separates a $150 CPU from a $600 one isn’t whether this loop happens. It’s how efficiently it happens, how many loops occur in parallel, and how well the CPU manages the inevitable delays.

Inside the Silicon: What a CPU Is Made Of

Most explanations list CPU components like a parts inventory. That’s the wrong way to understand them. Each component exists to solve a specific problem in the execution pipeline.

The Arithmetic Logic Unit: Where Math Gets Done

The ALU handles all arithmetic (addition, subtraction, multiplication) and logical operations (AND, OR, NOT). When software tells a CPU to add two numbers or compare two values, the ALU is doing the work. Modern CPUs contain multiple ALUs operating in parallel, which is one reason they can handle several operations per clock cycle.

The Control Unit: Traffic Management

The Control Unit directs data flow between the ALU, registers, and memory. It interprets decoded instructions and signals the correct components to act. Without it, the CPU would have raw computing power with no coordination, like a construction crew with no foreman.

Registers: Memory That Lives on the CPU

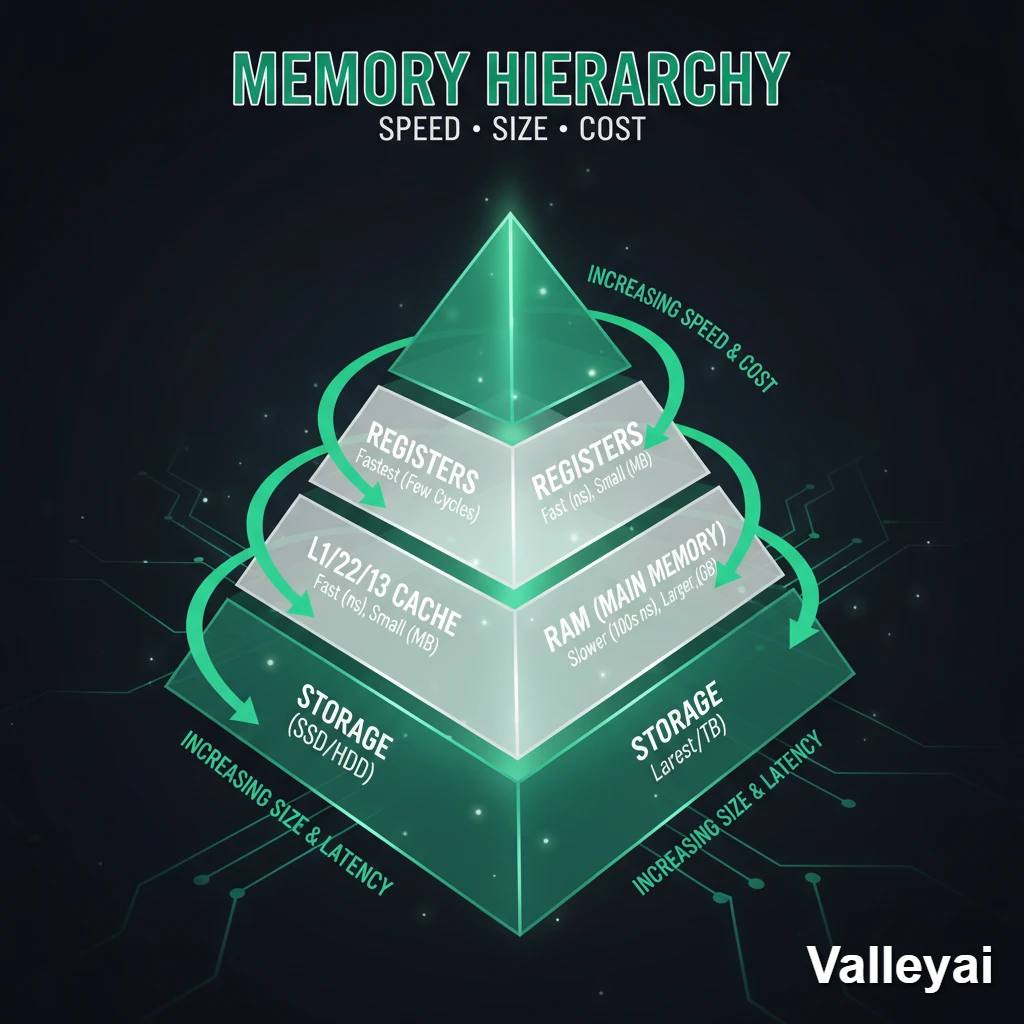

Registers are the fastest memory in your system. Accessing a CPU register takes less than 1 nanosecond. There are only a small number of them, typically 16 to 32 general-purpose registers (x86-64 defines 16; 64-bit ARM defines 31), but they’re where active computation happens. Data moves from RAM → cache → registers → ALU and back. Every step in that chain is faster than the last.

The Cache Hierarchy: The Problem Nobody Explains Properly

Cache is where most CPU performance conversations should start but rarely do.

When a CPU needs data, it doesn’t go straight to RAM. It checks its internal cache first, and the speed difference between a cache hit and a RAM access is dramatic:

| Cache Level | Typical Latency | Typical Size (per core) |
|---|---|---|
| L1 Cache | ~4 cycles | 32–64 KB |
| L2 Cache | ~12 cycles | 256 KB – 1 MB |
| L3 Cache | ~40–50 cycles | 8–64 MB (shared) |
| Main RAM (DDR5) | ~200+ cycles | 16–128 GB |

Latency figures based on published CPU microarchitecture documentation and AnandTech methodology.

A cache miss at L3 level doesn’t just cause a small delay. It forces the CPU to stall for 200+ cycles waiting on RAM, time it could have spent executing other instructions. For workloads that repeatedly access the same data (databases, video encoding, gaming), cache size and efficiency matter more than raw clock speed.
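A rough way to see how much a bigger cache can matter is to compute the average cost of a memory access across the whole hierarchy. The sketch below uses the latencies from the table, but the hit rates are invented for illustration and the function name `average_access_cycles` is hypothetical; real hit rates depend entirely on the workload.

```python
def average_access_cycles(levels, ram_latency):
    """Expected cycles per memory access.

    levels: (latency_cycles, hit_rate) pairs ordered from L1 outward, where
    hit_rate is the chance that a request reaching this level is served by it.
    """
    expected = 0.0
    reach = 1.0  # probability that a request gets this far down the hierarchy
    for latency, hit_rate in levels:
        expected += reach * hit_rate * latency
        reach *= 1.0 - hit_rate
    return expected + reach * ram_latency  # whatever is left goes to RAM


# Latencies come from the table; the hit rates are assumptions, not measurements.
small_l3 = [(4, 0.80), (12, 0.50), (45, 0.50)]
large_l3 = [(4, 0.80), (12, 0.50), (45, 0.90)]

print(round(average_access_cycles(small_l3, 200), 2))
print(round(average_access_cycles(large_l3, 200), 2))
```

With these made-up numbers, raising only the L3 hit rate cuts the average from about 16.65 cycles to about 10.45, because far fewer requests fall through to the 200-cycle RAM path.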

This is why AMD’s 3D V-Cache CPUs (like the Ryzen 7 5800X3D) showed dramatic gaming performance improvements over standard chips with higher clock speeds. The extra 64 MB of L3 cache reduced stalls.

Clock Speed Is Not Performance

This is the single most misunderstood concept in CPU purchasing.

Clock speed, measured in GHz, tells you how many cycles per second a CPU can execute. A 4.0 GHz CPU completes 4 billion cycles per second. But it says nothing about how much useful work happens in each cycle.

That measurement is IPC (Instructions Per Clock). Together with clock speed, it’s the number that actually determines performance.

Using round, illustrative numbers: a CPU running at 3.5 GHz with an IPC of 12 instructions per cycle delivers more throughput than a 4.5 GHz CPU with an IPC of 8. The math:

- CPU A: 3.5 GHz × 12 IPC = 42 billion instructions/second

- CPU B: 4.5 GHz × 8 IPC = 36 billion instructions/second

CPU A wins. And CPU A might be from a newer architecture despite the lower clock speed.
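The same arithmetic as a short Python sketch, using the illustrative figures from the comparison above. The helper names `instructions_per_second` and `ipc_needed_to_match` are made up for this example.

```python
def instructions_per_second(clock_ghz, ipc):
    """Peak throughput: cycles per second times instructions per cycle."""
    return clock_ghz * 1e9 * ipc


def ipc_needed_to_match(own_clock_ghz, rival_clock_ghz, rival_ipc):
    """IPC a CPU needs at its own clock to equal a rival's throughput."""
    return rival_clock_ghz * rival_ipc / own_clock_ghz


cpu_a = instructions_per_second(3.5, 12)
cpu_b = instructions_per_second(4.5, 8)
print(cpu_a / 1e9, cpu_b / 1e9)  # 42.0 36.0
print(round(ipc_needed_to_match(3.5, 4.5, 8), 2))  # 10.29
```

The second figure is the break-even point: at 3.5 GHz, CPU A only needs an IPC above roughly 10.3 to beat CPU B, so a 1 GHz clock deficit is erased by an IPC advantage of under 30%.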

This is exactly what happened with the Intel 12th generation (Alder Lake) launch. Intel’s Core i9-12900K, with a 3.2 GHz base clock, outperformed the previous-generation Core i9-11900K, with a 3.5 GHz base clock, in nearly every benchmark, because Alder Lake’s IPC improvement was roughly 19% over Rocket Lake.

The practical implication: Never compare clock speeds across CPU generations or brands. GHz comparisons are only meaningful within the same microarchitecture family.

Cores, Threads, and the Parallelism Trap

More cores is better. Sometimes. The answer depends entirely on what you’re running.

What Cores and Threads Actually Are

A core is a physical processing unit. An 8-core CPU has 8 independent execution pipelines.

A thread is a logical execution unit. Through Intel’s Hyper-Threading (or AMD’s SMT — Simultaneous Multithreading), each physical core can handle two threads simultaneously by sharing execution resources. An 8-core CPU with Hyper-Threading presents 16 threads to the operating system.

The nuance most guides miss: two threads on one core don’t equal two full cores. They share the ALU and execution units. When both threads are compute-intensive, they compete for the same resources, and the gain is partial: typically a 15–30% throughput improvement, not 100%.
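As a quick sanity check on what “16 threads” buys you, the sketch below converts the 15–30% range into core-equivalents of throughput. `effective_cores` is a hypothetical helper, and it assumes every core is running two busy threads.

```python
def effective_cores(physical_cores, smt_gain):
    """Core-equivalents of throughput when every core runs two busy threads."""
    return physical_cores * (1.0 + smt_gain)


low = effective_cores(8, 0.15)
high = effective_cores(8, 0.30)
print(f"{low:.1f} to {high:.1f} core-equivalents, not 16")
# 9.2 to 10.4 core-equivalents, not 16
```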

How Different Workloads Actually Use Cores

| Workload | Core Utilization Pattern | Practical Recommendation |
|---|---|---|
| Gaming (most titles) | 4–8 cores heavily; remainder largely idle | 6–8 cores sufficient for 1440p gaming |
| 4K Video Encoding (H.265) | All available cores saturated | 12–16 cores show meaningful gains |
| Web Browsing / Office | 1–2 cores, bursty | 4 cores overkill; 6 future-proof |
| 3D Rendering (Blender) | All cores fully utilized | More cores = near-linear improvement |
| Live Streaming (OBS) | 2–4 cores dedicated to encoding | 8+ cores recommended for game + stream |
| Software Compilation | Highly parallel; scales with cores | 12–16 cores beneficial |

Workload core utilization patterns based on published benchmark analysis from Tom’s Hardware and Puget Systems.

Most game engines still aren’t parallelized beyond 8 threads. The developers of Cyberpunk 2077 and Starfield have both noted CPU threading limitations in their engine architecture. Buying a 16-core CPU for gaming gives you minimal gaming benefit, but it makes sense if you’re also streaming, recording, or doing content creation alongside gaming.
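One way to see why extra cores help rendering far more than gaming is Amdahl’s law, which limits speedup by the share of work that has to run serially. The sketch below is illustrative only: the parallel fractions are assumptions chosen to show the two extremes, not measurements of any game engine or renderer.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Speedup over one core when only part of the work can run in parallel."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Assumed parallel fractions, chosen only to illustrate the two extremes.
workloads = {"game-like (60% parallel)": 0.60, "render-like (98% parallel)": 0.98}

for name, fraction in workloads.items():
    for cores in (8, 16):
        print(f"{name}, {cores} cores: {amdahl_speedup(fraction, cores):.2f}x")
```

Under these assumptions, going from 8 to 16 cores moves the game-like workload from about 2.1x to about 2.3x, while the render-like workload goes from about 7x to about 12x. The serial portion, not the core count, sets the ceiling.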

What TDP Actually Tells You (And What It Doesn’t)

TDP (Thermal Design Power) is measured in watts and represents the heat a CPU is rated to generate under sustained load at its base specification, which the cooling must dissipate. A 65W TDP CPU requires a cooler rated for at least 65W. A 125W TDP CPU needs a significantly more capable cooling solution.

What TDP doesn’t tell you: peak power consumption. Modern CPUs, especially Intel’s “K” series and AMD’s “X” series, can boost far beyond their rated TDP, and not only for short bursts. An Intel Core i9-13900K has a rated TDP of 125W but can draw over 250W during sustained all-core workloads.

This matters because:

- A cooler “rated for 125W” may not handle the actual 250W sustained load

- Inadequate cooling leads directly to thermal throttling

- Laptop CPUs are especially affected: their TDP is constrained by chassis size, not performance targets

When Your CPU Hits Its Limits: Thermal Throttling and Bottlenecks

Two performance problems that look similar but have completely different causes.

Thermal Throttling: The Silent Performance Killer

When a CPU’s temperature reaches its thermal junction limit (typically 90–105°C depending on the chip), it automatically reduces clock speed to lower heat output. This happens in real time, silently, in small frequency steps (often 100 MHz).

You’ll notice it as:

- Frame rate drops during extended gaming sessions (not just the first 10 minutes)

- Render times that increase over a long job but start fast

- CPU clock speeds in monitoring software that oscillate instead of holding steady

How to diagnose it: Open HWiNFO64 or Intel XTU while running a sustained workload. Watch the “CPU Core Temperature” and “CPU Core Clock” readings simultaneously. If temperature climbs above 90°C and clock speed drops at the same moment, that’s thermal throttling, not a failing CPU.
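If you note down temperature and clock readings over time, the same check can be applied mechanically. The sketch below is a minimal example: `find_throttle_events`, its thresholds, and the sample readings are all hypothetical, and it assumes you have already collected (temperature, clock) pairs from whatever monitoring tool you use.

```python
def find_throttle_events(samples, temp_limit_c=90.0, min_drop_mhz=100.0):
    """Return indices where the clock fell while the CPU was at or above the limit.

    samples: (temperature_c, clock_mhz) pairs in time order.
    """
    events = []
    for i in range(1, len(samples)):
        _, prev_clock = samples[i - 1]
        temp, clock = samples[i]
        if temp >= temp_limit_c and prev_clock - clock >= min_drop_mhz:
            events.append(i)
    return events


# Invented readings from a sustained load: heat builds, then clocks step down.
samples = [(72, 5000), (85, 5000), (91, 4900), (94, 4700), (93, 4700), (88, 4900)]
print(find_throttle_events(samples))  # [2, 3]
```

A clock drop while the chip is cool (for example when the load ends) is not flagged, which is the distinction that matters: only drops that coincide with high temperature point to throttling.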

The fix is almost always cooling, not the CPU. A better cooler, reapplied thermal paste (which degrades after 3–5 years), or improved case airflow resolves most throttling scenarios without hardware replacement.

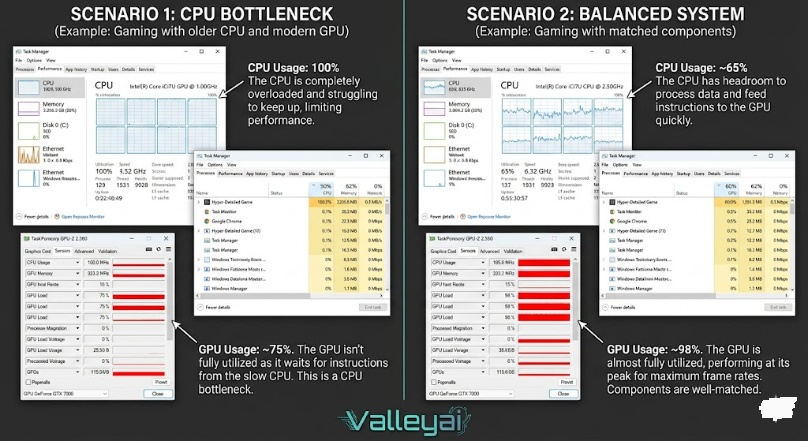

CPU Bottlenecks: When Your GPU Is Waiting

A CPU bottleneck in gaming means your GPU could render frames faster than the CPU can feed it draw calls and game logic. The GPU sits idle between frames.

The diagnostic signature:

- GPU utilization: 70–80% (should be 95–99%)

- CPU utilization: 95–100% (the bottleneck)

- Frame times are inconsistent (not just low average FPS)

This pattern is most common at 1080p resolution with high refresh rate targets (144Hz+), because lower resolution reduces GPU load without reducing CPU load. The same CPU that bottlenecks at 1080p/144fps may not bottleneck at 1440p/144fps because the GPU is now working harder.

Counterintuitively, the fastest fix for a CPU bottleneck in gaming isn’t always buying a new CPU. Increasing resolution or enabling higher graphical settings increases GPU load, which can reduce the relative CPU bottleneck without spending anything.
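The diagnostic signature can be written down as a small classifier. This is a sketch under stated assumptions: `classify_bottleneck` is a hypothetical helper, the utilization samples are invented, and the default thresholds (GPU below 85%, CPU at 95% or higher) are rules of thumb taken from this guide rather than hard limits.

```python
from statistics import mean


def classify_bottleneck(gpu_util, cpu_util, gpu_floor=85.0, cpu_ceiling=95.0):
    """Classify a gaming session from utilization samples given in percent."""
    avg_gpu = mean(gpu_util)
    avg_cpu = mean(cpu_util)
    if avg_gpu < gpu_floor and avg_cpu >= cpu_ceiling:
        return "cpu-bound"
    if avg_gpu >= 95.0:
        return "gpu-bound"
    return "inconclusive"


# Invented samples: the GPU waits while the CPU is pinned.
print(classify_bottleneck([74, 78, 71, 80], [97, 99, 96, 98]))  # cpu-bound
# Invented samples: the GPU is saturated, so the CPU is keeping up.
print(classify_bottleneck([98, 97, 99, 98], [60, 55, 62, 58]))  # gpu-bound
```

Anything that lands in "inconclusive" deserves a closer look at frame times before you spend money.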

x86 vs ARM: Why This Matters Now, Not Just for Phones

For decades, desktop and laptop CPUs used the x86 family of instruction set architectures (ISAs), a CISC design that originated at Intel and was extended to 64 bits (x86-64) by AMD. Mobile devices used ARM, a RISC-based architecture known for power efficiency.

Apple’s M-series chips changed this conversation permanently.

The Apple M1 (2020) was an ARM-based chip running at modest clock speeds that outperformed Intel’s 10th-generation Core i7 in single-threaded performance while consuming a fraction of the power. The M4 (2024) extends this lead further.

The practical implications for users:

| Factor | x86-64 (Intel/AMD) | ARM (Apple M-series, Qualcomm) |
|---|---|---|
| Software compatibility | Near-universal for Windows/Linux | macOS native; Windows ARM improving |
| Raw performance ceiling | Higher (desktop chips to 350W+) | Lower absolute ceiling, better efficiency |
| Power efficiency | Improving but still higher TDP | Best-in-class per-watt performance |
| Overclocking | Supported on unlocked chips | Not user-accessible |
| Upgrade path | Modular (CPU replaceable) | Integrated SoC (no upgrade) |

The ISA debate isn’t “which is better.” It’s “which is right for your platform.” If you’re building a Windows gaming PC, x86-64 remains the only real option. If you’re buying a Mac for creative work, ARM delivers better performance per watt than any x86 laptop at equivalent thermal envelopes.

Reading CPU Specs Without Getting Lost

When you land on a CPU product page, here’s what actually matters and in what order:

1. IPC Generation (Most Important — Not Listed Directly)

Identify the microarchitecture generation (e.g., “Zen 4,” “Raptor Lake,” “Alder Lake”). Newer generations = higher IPC. A newer-gen CPU at lower GHz often beats an older-gen CPU at higher GHz.

2. Core Count for Your Workload

Use the workload matrix above. Don’t buy 16 cores for gaming. Do buy 12+ cores if you’re encoding video or compiling code.

3. Base Clock vs Boost Clock

Base clock is the sustained frequency under extended load. Boost clock is the short-term peak. Marketing emphasizes boost clock. Real-world performance correlates more with sustained clock speeds under load, which depend on your cooling solution.

4. Cache Size

More L3 cache = better for gaming and latency-sensitive workloads. Less impactful for pure throughput tasks like encoding.

5. TDP and Platform

Higher TDP = higher performance ceiling, but requires better cooling. Check socket compatibility with your motherboard before purchasing, and confirm that the motherboard’s BIOS version supports the CPU if it’s a newer model.

6. Price-to-Performance Tier

The best-value CPUs rarely sit at the top of the stack. In 2024, mid-range 8-core CPUs from both AMD and Intel deliver 90%+ of flagship performance at 50–60% of the price.
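To put the value claim in numbers, here is a small worked example. The benchmark scores and prices are hypothetical, picked to sit inside the ranges quoted above (90% of the performance at 55% of the price); substitute real benchmark results and current prices when you compare actual chips.

```python
def performance_per_dollar(score, price_usd):
    """Benchmark points bought per dollar spent."""
    return score / price_usd


# Hypothetical figures: flagship scores 100 at $600, mid-range scores 90 at $330.
flagship = performance_per_dollar(100, 600)
midrange = performance_per_dollar(90, 330)
print(f"mid-range delivers {midrange / flagship:.2f}x the performance per dollar")
# mid-range delivers 1.64x the performance per dollar
```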

The CPU Myths That Keep Costing People Money

Myth 1: Higher GHz always means faster performance.

Corrected above. IPC and architecture generation matter more than raw clock speed across different CPU families.

Myth 2: More cores always mean better performance.

Depends entirely on the workload. A 16-core CPU is slower than an 8-core CPU in single-threaded tasks if the 8-core has higher IPC and clock speed. Game engines, in particular, rarely scale beyond 8 threads.

Myth 3: Thermal throttling means your CPU is defective.

Thermal throttling is a designed safety feature. It means your cooling solution isn’t keeping up with your CPU’s heat output. The CPU is working correctly: it’s protecting itself.

Myth 4: You need to upgrade your CPU every generation.

A well-chosen CPU from 3–4 years ago still handles most workloads fine. The performance gains between consecutive generations are typically 10–20%. The gains over 3 generations are meaningful (30–50%), but annual upgrades are rarely justified.

Myth 5: Overclocking makes a significant difference.

Manual overclocking on modern CPUs typically yields 3–8% performance gains because CPUs already boost aggressively out of the box. The days of 30% overclock headroom are largely gone. Overclocking primarily makes sense for enthusiasts who want to push every last percentage point, not for users expecting a transformative performance jump.

Choosing a CPU: The Framework That Actually Works

Most buying guides give you a product list. This is a decision framework you can apply regardless of what’s available when you read this.

Step 1: Define your primary workload.

Gaming, video editing, software development, general productivity, and content creation have different core/thread requirements. Identify your primary use case first.

Step 2: Identify the performance tier you actually need.

If you’re gaming at 1080p/60fps, a 6-core CPU from the last 2 generations is adequate. If you’re rendering 4K video professionally, you need 12+ cores. Be honest about your actual needs, not aspirational ones.

Step 3: Check platform compatibility.

Verify socket, chipset, and BIOS support. An AMD Ryzen 7000 series CPU won’t work in an existing AM4 motherboard, because AM5 is a different socket.

Step 4: Compare within the same generation.

Use GHz as a differentiator only when comparing CPUs within the same microarchitecture family. Cross-generation comparisons require benchmark data, not spec sheets.

Step 5: Check thermals and cooling requirements.

If you’re buying a 125W+ TDP CPU, budget for adequate cooling. A $30 stock cooler on a 125W chip will throttle constantly. A $50–80 tower cooler is often the better investment over spending $100 more on a higher-tier CPU that throttles.

Most gaming CPUs are overkill for their marketed use case. A $200 8-core CPU handles 1440p gaming at 144Hz with headroom to spare. The $500 16-core version isn’t buying you gaming performance. It’s buying you a streaming rig, a video editing workstation, or future-proofing you may never use.

Frequently Asked Questions

What’s the difference between CPU cores and threads?

Cores are physical execution units. Threads are logical units: with Hyper-Threading or SMT, each core can handle two threads simultaneously by sharing execution resources. Two threads per core doesn’t double performance; it typically adds 15–30% throughput for multi-threaded workloads.

Is a higher GHz CPU always faster?

No. GHz only measures clock cycles per second. IPC (Instructions Per Clock) determines how much work happens per cycle. A 3.5 GHz CPU from a newer architecture can outperform a 4.5 GHz CPU from an older generation. Use GHz as a differentiator only when comparing CPUs within the same architectural generation.

What does CPU cache do?

Cache is high-speed memory built into the CPU that stores frequently accessed data. Accessing L1 cache takes ~4 cycles; accessing RAM takes 200+ cycles. Larger cache reduces the frequency of slow RAM accesses, which is why cache size matters for gaming and latency-sensitive workloads.

Why does my CPU get hot under load?

CPUs generate heat as a byproduct of electrical resistance in transistors. Under sustained load, heat output increases. If your cooling solution can’t dissipate heat fast enough, temperatures rise. Above 90–105°C (depending on the chip), the CPU automatically reduces clock speed (thermal throttling) to protect itself.

How do I know if my CPU is bottlenecking my GPU?

Monitor GPU utilization while gaming. If GPU utilization sits below 85% while CPU utilization is near 100%, your CPU is the limiting factor. This is most common at 1080p with high refresh rate targets. Increasing resolution or graphical settings often reduces the bottleneck without hardware upgrades.

Do I need to upgrade my CPU for gaming?

Not necessarily. If your current CPU is within 2–3 generations of current hardware and you’re hitting 90%+ GPU utilization in games, your CPU is fine. If you’re seeing low GPU utilization and inconsistent frame times, a CPU upgrade may help. Check utilization metrics before spending money.

What’s the difference between x86 and ARM CPUs?

x86 (Intel/AMD) uses a CISC instruction set optimized for raw performance and software compatibility on Windows/Linux. ARM uses a RISC instruction set optimized for power efficiency. Apple’s M-series chips demonstrated that ARM can match or beat x86 in performance per watt. For Windows gaming PCs, x86 remains the standard. For Mac-based creative work, ARM is now the better choice.

The CPU market moves fast, but the underlying principles don’t. IPC, cache efficiency, thermal management, and workload-specific core utilization have governed CPU performance for decades, and they’ll govern it for the next decade regardless of which company wins the next benchmark war. Understanding these fundamentals means you’ll make better decisions with every CPU generation that ships, not just the one that’s current when you read this.

Kaleem

My name is Kaleem, and I am a computer science graduate with 5+ years of experience in computer science, AI, tech, and web innovation. I founded ValleyAI.net to simplify AI, internet, and computer topics, and I also focus on building useful utility tools. My clear, hands-on content is trusted by 5K+ monthly readers worldwide.