Most people associate binary code, the endless strings of 0s and 1s, with modern computer screens, hackers, and the digital revolution. But the true history of binary code does not begin with the invention of the microchip. It begins thousands of years ago in the realms of philosophy, linguistics, and ancient mathematics.

The history of binary is best understood through a three-stage framework we call The Evolution of Duality:

- Philosophical Binary: Ancient civilizations using duality (light/dark, solid/broken) to map the universe and language.

- Mathematical Binary: Enlightenment-era thinkers formalizing these concepts into a rigorous, base-2 number system.

- Physical Binary: Modern engineers translating that math into the electrical pulses that power today’s hardware.

From ancient Indian poetry to the logic gates inside your smartphone, here is the complete history of how the binary number system was invented, refined, and ultimately used to build the modern world.

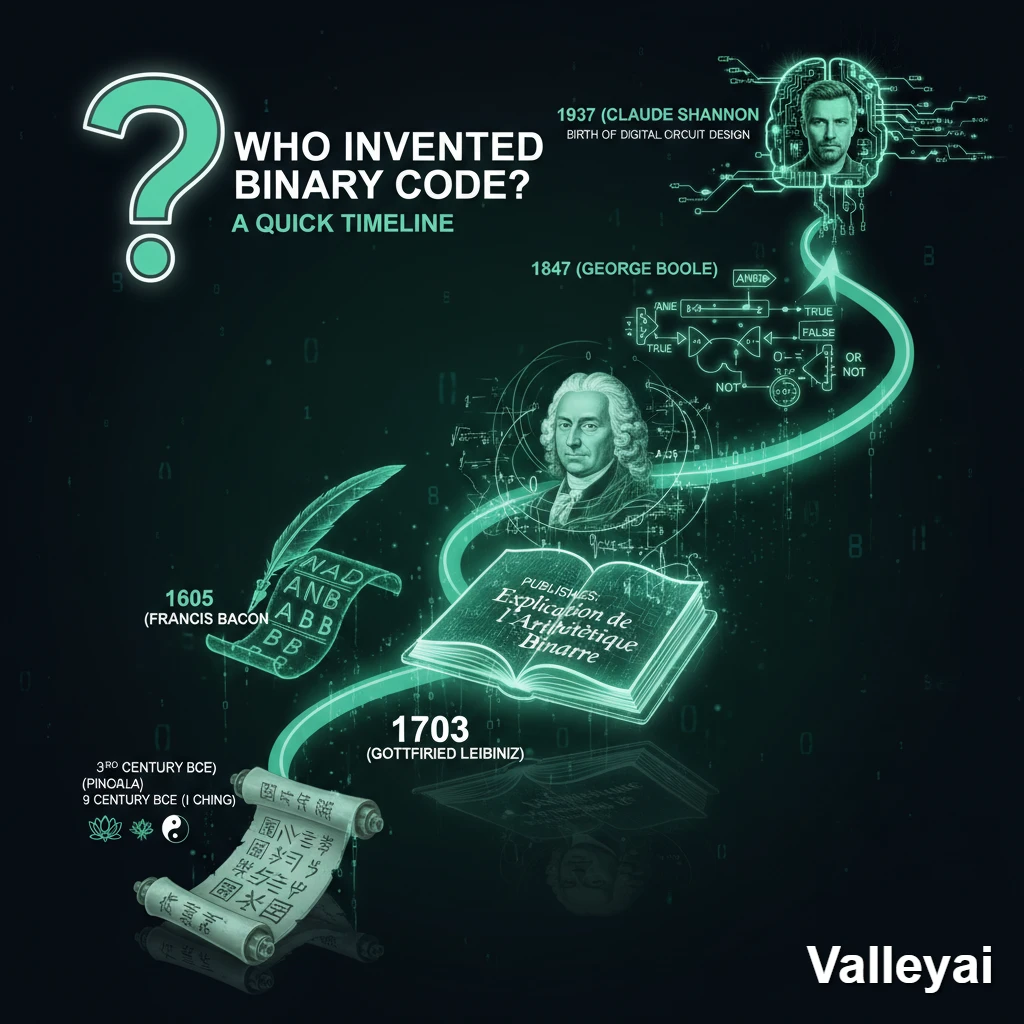

Who Invented Binary Code? A Quick Timeline

Gottfried Wilhelm Leibniz worked out the modern binary number system in 1679 and formalized it in his 1703 paper. However, ancient Indian and Chinese scholars used binary-like concepts many centuries earlier. Later, in 1937, Claude Shannon applied Boolean logic and base-2 arithmetic to relay switching circuits, laying the groundwork for modern digital computing.

To understand the full scope of this evolution, here are the critical milestones:

- c. 9th Century BCE (I Ching): Chinese divination text uses 64 hexagrams made of solid and broken lines.

- c. 3rd Century BCE (Pingala): Ancient Indian scholar describes a binary-like system to classify poetic meters.

- 1605 (Francis Bacon): Devises a biliteral cipher, showing that every letter of the alphabet can be encoded using just two symbols.

- 1703 (Gottfried Leibniz): Publishes Explication de l’Arithmétique Binaire, formalizing the modern base-2 mathematical system.

- 1847 (George Boole): Introduces what becomes Boolean algebra, treating logical True/False statements as algebra on the values 1 and 0.

- 1937 (Claude Shannon): Shows that Boolean logic maps directly onto relay switching circuits, giving birth to digital circuit design.

The Pre-Digital Era: Ancient Binary Systems

Long before computers, ancient civilizations utilized binary logic to organize information. The earliest known binary systems include the ancient Indian mathematician Pingala’s linguistic rules in the 3rd century BCE and the Chinese I Ching’s 64 hexagrams, which represented philosophical duality using broken and solid lines.

Pingala and the Chandaḥśāstra

The earliest conceptual ancestor of binary is found in ancient India. In his treatise, the Chandaḥśāstra (also known as the Chandaḥsūtra), the scholar Pingala developed a mathematical system to describe the meter of Sanskrit poetry. He used short syllables (laghu) and long syllables (guru) to create combinations that work much like the digits of a modern base-2 (radix-2) system: with two choices per syllable, a meter of $n$ syllables has $2^n$ possible patterns.
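
The counting idea behind this can be illustrated with a short Python sketch. It simply lists every pattern of laghu (L) and guru (G) syllables for a given length; the function name and the letters L and G are illustrative choices, and the output order is not meant to reproduce Pingala's own arrangement.

```python
from itertools import product

def meter_patterns(n):
    """Return every pattern of n syllables, each either guru (G) or laghu (L)."""
    return ["".join(p) for p in product("GL", repeat=n)]

patterns = meter_patterns(3)
print(len(patterns))  # 8, because 2 ** 3 == 8
print(patterns)
```

Each extra syllable doubles the number of patterns, which is the same growth seen when a bit is added to a binary number.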

The I Ching (Book of Changes)

In ancient China, the philosophical text known as the I Ching mapped the universe using a system of duality: Yin (yielding/dark, drawn as a broken line) and Yang (firm/light, drawn as a solid line). These lines were stacked into trigrams (three lines) and hexagrams (six lines). The 64 possible hexagrams correspond exactly to the 64 values of a 6-bit binary number (since $2^6 = 64$).
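
As a rough illustration, a hexagram can be read as a 6-bit number. The sketch below assumes solid = 1 and broken = 0; the function name and the choice to treat the first listed line as the most significant bit are assumptions made for the example, not a claim about how the I Ching itself orders its hexagrams.

```python
def hexagram_to_int(lines):
    """Read six lines ('solid' or 'broken') as a 6-bit binary number.

    Assumption: solid = 1, broken = 0, first line = most significant bit.
    """
    if len(lines) != 6:
        raise ValueError("a hexagram has exactly six lines")
    value = 0
    for line in lines:
        bit = 1 if line == "solid" else 0
        value = value * 2 + bit  # shift left by one place, then add the new bit
    return value

print(hexagram_to_int(["broken"] * 6))  # 0
print(hexagram_to_int(["solid"] * 6))   # 63
```

Six two-state lines cover the values 0 through 63, which is where the 64 hexagrams come from.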

Did the Ancient Egyptians Use Binary?

A common question is whether the Ancient Egyptians invented binary code. The Egyptians did not use a formal binary number system, but they did use a binary-like method for multiplication. It relied on repeatedly doubling one number and then adding the doublings that correspond to the powers of two making up the other, and their Horus-Eye fractions were likewise built from successive halvings (1/2, 1/4, 1/8, and so on). While mathematically brilliant, it was a calculation technique rather than a universal binary code.
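
The doubling method can be sketched in Python as follows. This is a modern reconstruction for illustration; the function name and the table layout are assumptions, not a transcription of any Egyptian source.

```python
def egyptian_multiply(a, b):
    """Multiply two non-negative integers using only doubling and addition."""
    # Build the doubling table: (1, b), (2, 2b), (4, 4b), ... up to a
    rows = []
    power, multiple = 1, b
    while power <= a:
        rows.append((power, multiple))
        power, multiple = power * 2, multiple * 2

    # Walk back down the table, keeping rows whose powers of two sum to a
    total = 0
    remaining = a
    for power, multiple in reversed(rows):
        if power <= remaining:
            remaining -= power
            total += multiple
    return total

print(egyptian_multiply(13, 21))  # 273, since 13 = 8 + 4 + 1
```

The rows that get selected correspond to the 1 digits in the binary form of the first factor (13 is 1101 in base 2), which is why the method is often described as binary-like.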

Gottfried Wilhelm Leibniz: The Father of Modern Binary

Gottfried Wilhelm Leibniz is widely regarded as the father of modern binary code because he formalized a base-2 number system using only 0 and 1. In his 1703 paper, “Explication de l’Arithmétique Binaire,” Leibniz showed that any number could be written, and any arithmetic carried out, using just two digits.

How Did Leibniz Discover Binary?

Leibniz did not invent binary in a vacuum. As a philosopher and mathematician, he was obsessed with creating a “Universal Characteristic,” a conceptual language that could reduce all human reasoning to pure mathematical calculation.

Leibniz had already worked out his binary arithmetic by 1679. Around 1701, a French Jesuit missionary named Joachim Bouvet introduced him to the hexagrams of the I Ching. Leibniz recognized that the solid and broken lines matched his own positional notation of 0s and 1s, and he took the ancient Chinese diagrams as confirmation that the system was universal.

In his 1703 treatise, he laid out the rules for binary addition, subtraction, multiplication, and division. Though he saw its mathematical beauty, Leibniz never built a machine capable of computing in binary; the technology to do so did not yet exist in his era.
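
To make the positional notation concrete, here is a minimal Python sketch of converting a number to base 2 and adding two binary numbers column by column with carries. The function names are illustrative, and the code shows the standard schoolbook procedure rather than Leibniz's own presentation.

```python
def to_binary(n):
    """Write a non-negative integer in base 2 by repeated division by 2."""
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        digits.append(str(n % 2))  # the remainder is the next digit, right to left
        n //= 2
    return "".join(reversed(digits))

def add_binary(x, y):
    """Add two binary strings column by column, carrying as in decimal addition."""
    result = []
    carry = 0
    i, j = len(x) - 1, len(y) - 1
    while i >= 0 or j >= 0 or carry:
        total = carry
        if i >= 0:
            total += int(x[i])
            i -= 1
        if j >= 0:
            total += int(y[j])
            j -= 1
        result.append(str(total % 2))  # digit written in this column
        carry = total // 2             # 1 is carried when the column sums to 2 or 3
    return "".join(reversed(result))

print(to_binary(13))              # 1101
print(add_binary("1101", "110"))  # 10011  (13 + 6 = 19)
```

The only addition facts needed are 0 + 0 = 0, 0 + 1 = 1, and 1 + 1 = 10, which is what makes binary arithmetic so easy to mechanize.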

The Tangible Transition: From Ciphers to Punch Cards

Before binary code controlled electricity, it controlled physical machines and secret messages. In 1605, Francis Bacon created a biliteral cipher using two letters to encode the alphabet, while the 1801 Jacquard Loom used punched cards, each position either a hole or no hole, to dictate fabric patterns, showing that a two-state code could automate physical hardware.

Francis Bacon’s Biliteral Cipher (The Ancestor to ASCII)

Nearly a century before Leibniz published his paper, Francis Bacon realized that a message could be hidden inside another text by using two distinct typefaces. To do this, he assigned a 5-letter combination of ‘a’s and ‘b’s to every letter of the alphabet.

- A = aaaaa (00000)

- B = aaaab (00001)

- C = aaaba (00010)

Bacon’s cipher is often cited as one of the earliest examples of text-to-binary encoding, a conceptual grandfather to modern encoding standards like ASCII and Unicode.
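
The mapping listed above can be sketched in a few lines of Python. Note the assumption: this uses the later 26-letter variant, in which each letter simply gets its alphabet position written in five binary digits. Bacon's original alphabet had 24 letters (I/J and U/V shared codes), so codes for later letters differ from his. The function name is illustrative.

```python
import string

def bacon_encode(text):
    """Encode letters as 5-symbol groups of 'a' and 'b' (26-letter variant)."""
    groups = []
    for ch in text.upper():
        if ch in string.ascii_uppercase:         # skip spaces, digits, punctuation
            index = ord(ch) - ord("A")           # A = 0, B = 1, C = 2, ...
            bits = format(index, "05b")          # five binary digits, e.g. 00010
            groups.append(bits.replace("0", "a").replace("1", "b"))
    return " ".join(groups)

print(bacon_encode("ABC"))  # aaaaa aaaab aaaba
```

Five two-state symbols give $2^5 = 32$ combinations, enough to cover the whole alphabet with a few codes left over.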

The Jacquard Loom: The First Physical Binary

In 1801, Joseph Marie Jacquard invented a mechanical loom that used stiff pasteboard cards with punched holes.

- Hole punched: The hook passes through, lifting the thread (State: 1 / On).

- No hole: The hook is blocked (State: 0 / Off).

This was a watershed moment. It showed that binary code was not just for math or secret messages: it could also serve as a set of instructions to automate a machine.

Boolean Algebra: Turning Math into Language

In 1847, mathematician George Boole laid out the system now known as Boolean algebra, which expresses logical statements as algebra. By treating “1” as True and “0” as False, Boole defined the logical operations (AND, OR, NOT) that engineers later implemented as the logic gates modern processors use to make decisions.

Leibniz proved that 0 and 1 could represent quantity. Boole proved that 0 and 1 could represent logic.

In his book The Mathematical Analysis of Logic, Boole demonstrated that logical reasoning could be reduced to algebraic equations. For example, an AND operation dictates that an action only occurs if Condition A is True (1) AND Condition B is True (1). Without Boolean algebra, a computer could store numbers, but it could not evaluate conditions or make automated decisions.
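
The three basic operations can be written out as a truth table with a short Python sketch. The function names are illustrative, and the arithmetic definitions below are one common way to express the operations on 0 and 1, not a quotation of Boole's notation.

```python
from itertools import product

def and_op(a, b):
    """1 only when both inputs are 1."""
    return a * b

def or_op(a, b):
    """1 when at least one input is 1."""
    return a + b - a * b

def not_op(a):
    """Flip 0 to 1 and 1 to 0."""
    return 1 - a

print("A B | AND OR NOT-A")
for a, b in product((0, 1), repeat=2):
    print(a, b, "|", and_op(a, b), " ", or_op(a, b), " ", not_op(a))
```

Because every input and output is either 0 or 1, these operations can be chained freely, which is what lets simple conditions combine into complex decisions.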

The Engineering Leap: Claude Shannon and Electronic Binary

Binary logic entered computer engineering in the late 1930s, thanks largely to Claude Shannon. In his 1937 MIT master’s thesis, Shannon showed that Boolean logic and binary math could be mapped directly onto relay switching circuits, bridging the gap between abstract mathematics and physical computing.

When Was Binary Code First Used in Computers?

The theoretical math of Leibniz and Boole sat largely dormant until the dawn of the electronic age. In 1937, Claude Shannon completed his thesis, A Symbolic Analysis of Relay and Switching Circuits, which was published the following year. He realized that the switches in electrical circuits have two natural states:

- Switch Closed = Current flows (1 / True)

- Switch Open = Current blocked (0 / False)

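This was the birth of digital circuit design. (Information theory came later, with Shannon’s 1948 paper on communication.) In the 1940s, early computers put these ideas to work: Konrad Zuse’s Z3 (1941) computed in binary using electromechanical relays, while the ENIAC (1945) switched to much faster vacuum tubes, although it still represented numbers in decimal. Its successors adopted binary, and vacuum tubes were later replaced by the microscopic transistors that power today’s microchips.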
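
The link between switches and logic can be sketched in Python: two switches in series behave like AND, and two in parallel behave like OR. The half adder below, which adds two one-bit numbers, is a standard textbook construction included for illustration; the function names are assumptions, and this is not a reproduction of a circuit from Shannon's thesis.

```python
def series(a, b):
    """Two switches in series: current flows only if both are closed (AND)."""
    return bool(a) and bool(b)

def parallel(a, b):
    """Two switches in parallel: current flows if either is closed (OR)."""
    return bool(a) or bool(b)

def half_adder(a, b):
    """Add two one-bit values; returns (sum_bit, carry_bit)."""
    carry = series(a, b)
    # The sum bit is 1 when exactly one input is closed:
    # (a OR b) AND NOT (a AND b)
    sum_bit = series(parallel(a, b), not carry)
    return int(sum_bit), int(carry)

for a in (0, 1):
    for b in (0, 1):
        print(a, "+", b, "=", half_adder(a, b))
```

For inputs 1 and 1 the result is a sum bit of 0 and a carry of 1, which is binary 10, the number two. Logic operations built from switches are enough to perform arithmetic.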

Why Do Computers Use Binary Instead of Decimal?

Computers use binary instead of decimal because of hardware reliability and signal-to-noise ratios. It is physically much easier and less error-prone for an electronic circuit to distinguish between two distinct voltage states, “On” (High) and “Off” (Low), than to measure ten different voltage levels accurately.

The Engineering Reality: Signal-to-Noise

A common question is: Why didn’t engineers just build base-10 (decimal) computers to match human math?

Early computer scientists actually tried. But the physical world is messy. Electricity fluctuates due to heat, interference, and degradation (known as “noise”).

- If a computer uses a Decimal System (Base-10), it has to measure 10 distinct voltage levels (e.g., 0 volts to 9 volts). A slight power drop could cause a “7” to be misread as a “6,” silently corrupting the calculation.

- If a computer uses a Binary System (Base-2), it only needs to detect two states. Anything above a certain voltage threshold is a “1”. Anything below is a “0”.
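
The difference in noise tolerance can be shown with a toy Python sketch. All the numbers here are hypothetical: decimal digits are assumed to sit 1 volt apart, binary uses 0 V and 5 V with a 2.5 V threshold, and the noise is modeled as a fixed 0.6 V drop. Real hardware uses different levels and margins.

```python
def read_decimal(voltage):
    """Decode a digit from ten levels spaced 1 V apart (0 V = 0 ... 9 V = 9)."""
    return min(9, max(0, round(voltage)))

def read_binary(voltage, threshold=2.5):
    """Decode a bit: at or above the threshold is 1, below it is 0."""
    return 1 if voltage >= threshold else 0

drop = 0.6  # hypothetical voltage sag caused by noise

print(read_decimal(7.0 - drop))  # 6: the digit 7 is misread
print(read_binary(5.0 - drop))   # 1: the bit is still read correctly
```

In this model a decimal level tolerates less than half a volt of error before it is mistaken for its neighbor, while the binary signal can drift by up to 2.5 V and still be read correctly.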

The Failure of Ternary Computers

Engineers even experimented with ternary computing (Base-3: Positive, Negative, and Zero), most notably with the Soviet Union’s Setun computer, built in 1958. Although base 3 is in theory a slightly more economical way to represent numbers than base 2, three-state hardware never reached production at scale, and the industry standardized on binary components. Binary won because two-state devices are the simplest to build and the most physically robust, error-resistant way to manage electrical currents.

Conclusion: A 3,000-Year Journey

From ancient scholars mapping the universe with solid and broken lines, to Leibniz’s mathematical formalization, to Shannon’s switching circuits, the history of binary code is the ultimate story of human synthesis. It is a marriage of philosophy, mathematics, and engineering, proving that the most complex digital worlds can be built entirely out of the simplest duality: 0 and 1.

Kaleem

My name is Kaleem, and I am a computer science graduate with 5+ years of experience in computer science, AI, tech, and web innovation. I founded ValleyAI.net to simplify AI, internet, and computer topics, and I also focus on building useful utility tools. My clear, hands-on content is trusted by 5K+ monthly readers worldwide.