Most people assume RAM and ROM are just two types of storage. They’re not. One is a workspace. The other is a blueprint. Confusing them is like confusing a whiteboard with a printed instruction manual: both hold information, but they serve completely different purposes, and replacing one with the other breaks everything.

Here’s what the textbooks get partially wrong: ROM is no longer read-only. That name is a historical artifact. Modern devices use rewritable ROM variants every day. Understanding why that matters, and why the difference between RAM and ROM still holds despite it, is what separates a surface-level explanation from one that actually sticks.

The Core Split: Active Workspace vs Permanent Blueprint

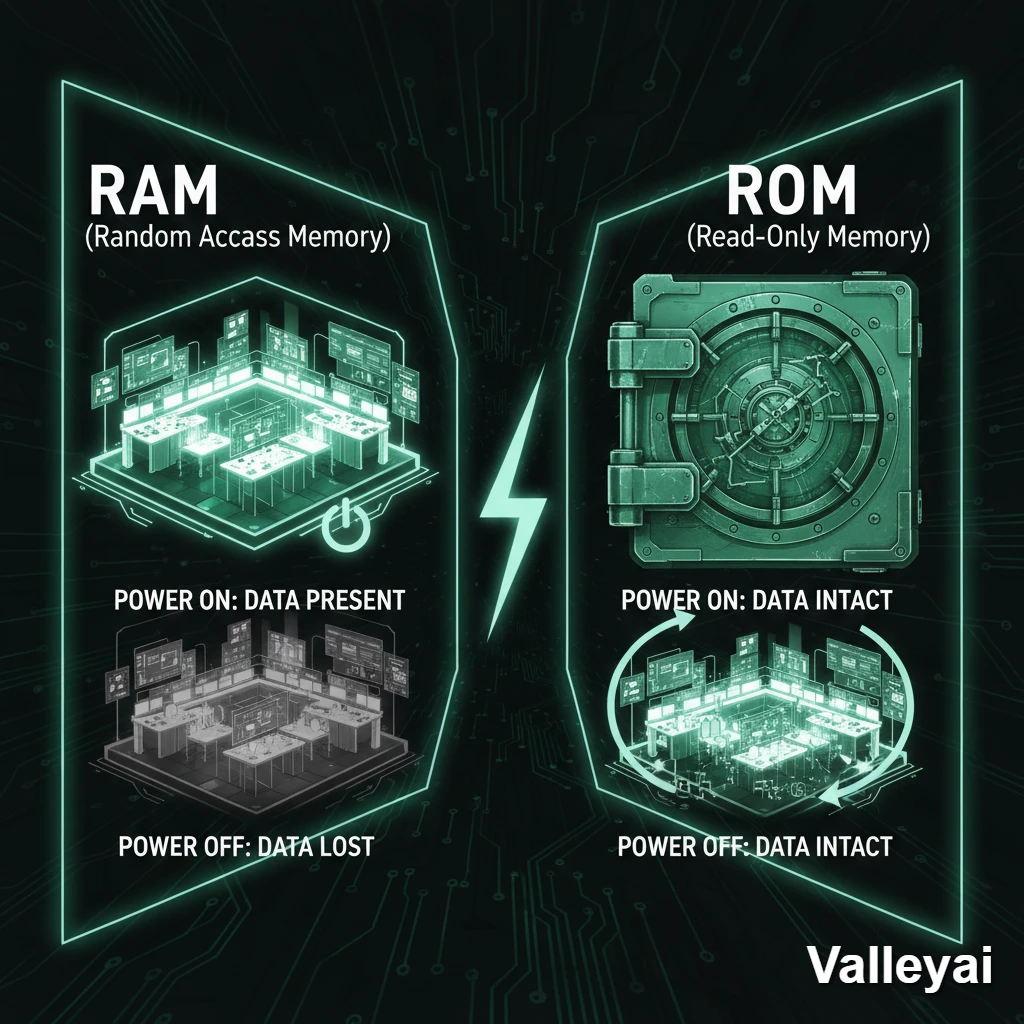

RAM (Random Access Memory) and ROM (Read-Only Memory) occupy different roles in any computing device, and the difference comes down to one fundamental property: volatility.

RAM is volatile. Cut the power, and everything stored in RAM disappears within moments. No slow fade you can count on: it’s gone. ROM is non-volatile. Power it down, pull the battery, leave it on a shelf for five years. The data is still there when you turn it back on.

That single property, whether data survives without power, determines everything else about how these two memory types are used, designed, and sized in modern hardware.

What RAM Actually Does (And What “Random Access” Really Means)

RAM is the memory your processor uses right now: the live working space where active data and running programs sit while the CPU processes them.

When you open a browser, the OS loads it from storage into RAM. As you switch tabs, the browser keeps each tab’s state in RAM. When you close the browser, the OS reclaims that memory. All of this happens at speeds that secondary storage (SSDs, HDDs) cannot match: RAM operates in nanoseconds, while even fast NVMe SSDs operate in microseconds.

The “Random Access” in RAM doesn’t mean the memory stores data randomly. It means any memory address can be accessed in the same amount of time, regardless of where it is physically located. That’s the opposite of sequential-access memory (like magnetic tape), where you had to read past earlier data to reach later data. Random access = direct addressing in constant time. That’s a meaningful technical distinction, not just naming trivia.
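To make the distinction concrete, here is a toy cost model in Python. It assumes one time unit per record the tape head winds past and a flat one-unit cost for any direct RAM lookup. Real DRAM latency varies a little (row-buffer hits are faster than misses), so treat this as an illustration of the idea, not a timing model; the function names are hypothetical.

```python
def tape_read_cost(position: int, head: int = 0) -> int:
    """Sequential access: the head must wind past every record between
    its current position and the target (assumed 1 unit per record)."""
    return abs(position - head) + 1  # +1 to read the target record itself


def ram_read_cost(address: int) -> int:
    """Random access: any address is reached directly, at the same cost.
    The address is accepted but deliberately does not affect the cost."""
    return 1


for addr in [0, 10, 1_000, 999_999]:
    print(f"addr {addr:>7}: tape={tape_read_cost(addr):>9}  ram={ram_read_cost(addr)}")
```

The tape cost grows with distance from the head; the RAM cost stays flat no matter which address you ask for, which is exactly what “direct addressing in constant time” means.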

What RAM stores:

- The operating system kernel loaded at boot

- Active application code and data

- Browser tabs, open documents, running processes

- Temporary variables the CPU is actively computing

RAM capacity directly determines how many processes can run simultaneously without the OS being forced to use slower storage as overflow (a process called paging or swapping). When paging kicks in, system responsiveness drops noticeably. That’s the real-world consequence of insufficient RAM: not data loss, but slowdown.

Most people blame “too little RAM” when their system slows down, but I’ve seen 32GB systems that were still sluggish because the application was single-threaded and CPU-bound. RAM gets blamed for everything. Half the time it’s innocent.

RAM Subtypes: Why SRAM and DRAM Exist Side-by-Side

There are two main RAM architectures, and both are in your device right now, just in different places.

| Property | SRAM (Static RAM) | DRAM (Dynamic RAM) |
|---|---|---|
| Storage mechanism | Flip-flop circuits (transistors) | Capacitors + transistors |
| Refresh required? | No | Yes (capacitors leak charge) |
| Speed | Faster (around 1 ns for L1 cache) | Slower (tens of nanoseconds) |
| Density | Low (typically 6 transistors per cell) | High (1 capacitor + 1 transistor per cell) |
| Cost | Expensive | Cheaper |
| Typical use | CPU cache (L1, L2, L3) | Main system RAM (what you buy as “16GB RAM”) |

DRAM requires constant refresh: the capacitor that stores each bit slowly leaks its charge, so the memory controller must periodically read and rewrite every cell to keep the data. Each cell is typically refreshed at least once every 64 milliseconds, which means refresh commands go out many thousands of times per second. Refresh costs power and briefly ties up the memory, overhead that SRAM avoids. But SRAM needs around six transistors per bit, so it is reserved for the smallest, fastest memory tiers (CPU cache), where raw speed matters more than cost per bit.
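A quick back-of-the-envelope calculation shows what that refresh schedule looks like. The figures are assumptions based on common DDR4 parameters: every cell must be refreshed within a 64 ms window, and the controller spreads that work across 8,192 refresh commands. Other DRAM generations and high-temperature modes use different numbers.

```python
RETENTION_WINDOW_S = 64e-3  # assumed: every cell refreshed at least once per 64 ms
REFRESH_COMMANDS = 8192     # assumed: refresh commands issued per 64 ms window

# Average gap between consecutive refresh commands, in microseconds
interval_us = RETENTION_WINDOW_S / REFRESH_COMMANDS * 1e6

# Refresh commands the controller issues every second
commands_per_second = REFRESH_COMMANDS / RETENTION_WINDOW_S

# How many times per second any single cell gets refreshed
per_cell_per_second = 1 / RETENTION_WINDOW_S

print(f"one refresh command every {interval_us:.2f} us")
print(f"{commands_per_second:,.0f} refresh commands per second")
print(f"each cell refreshed about {per_cell_per_second:.0f} times per second")
```

Under these assumptions the controller issues a refresh command roughly every 7.8 microseconds, about 128,000 per second, even though any individual cell is only revisited around 16 times per second.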

When someone says “my laptop has 16GB RAM,” they mean 16GB of DRAM. The SRAM cache is measured in megabytes and lives inside the CPU die itself.

What ROM Actually Does (And Why the Name Became Misleading)

ROM stores the instructions a device needs before it can do anything else: before the OS loads, before any software runs, before the system knows what it is.

On a desktop or laptop, ROM holds the BIOS (Basic Input/Output System) or UEFI firmware: the code that runs the moment power reaches the motherboard. It initializes the CPU, checks hardware, and hands control to the bootloader. Without ROM, the system has no starting point. It would power on with nothing to execute.

On a microcontroller in a smart thermostat or industrial sensor, ROM holds the entire program. Often there’s no operating system at all: just firmware in ROM, a tiny amount of RAM for sensor readings, and that’s it.

ROM is non-volatile because the data it holds must survive power loss by design. A BIOS chip that lost its firmware every time you unplugged the computer would be useless.

What ROM stores:

- Firmware (BIOS/UEFI on computers; embedded programs on microcontrollers)

- Boot instructions

- Hardware initialization sequences

- Factory-configured device parameters

The ROM Naming Paradox: “Read-Only” Memory You Can Write To

This is where most explanations quietly skip a contradiction and hope you don’t notice.

Modern ROM is rewritable. That’s not an exception; it’s the norm.

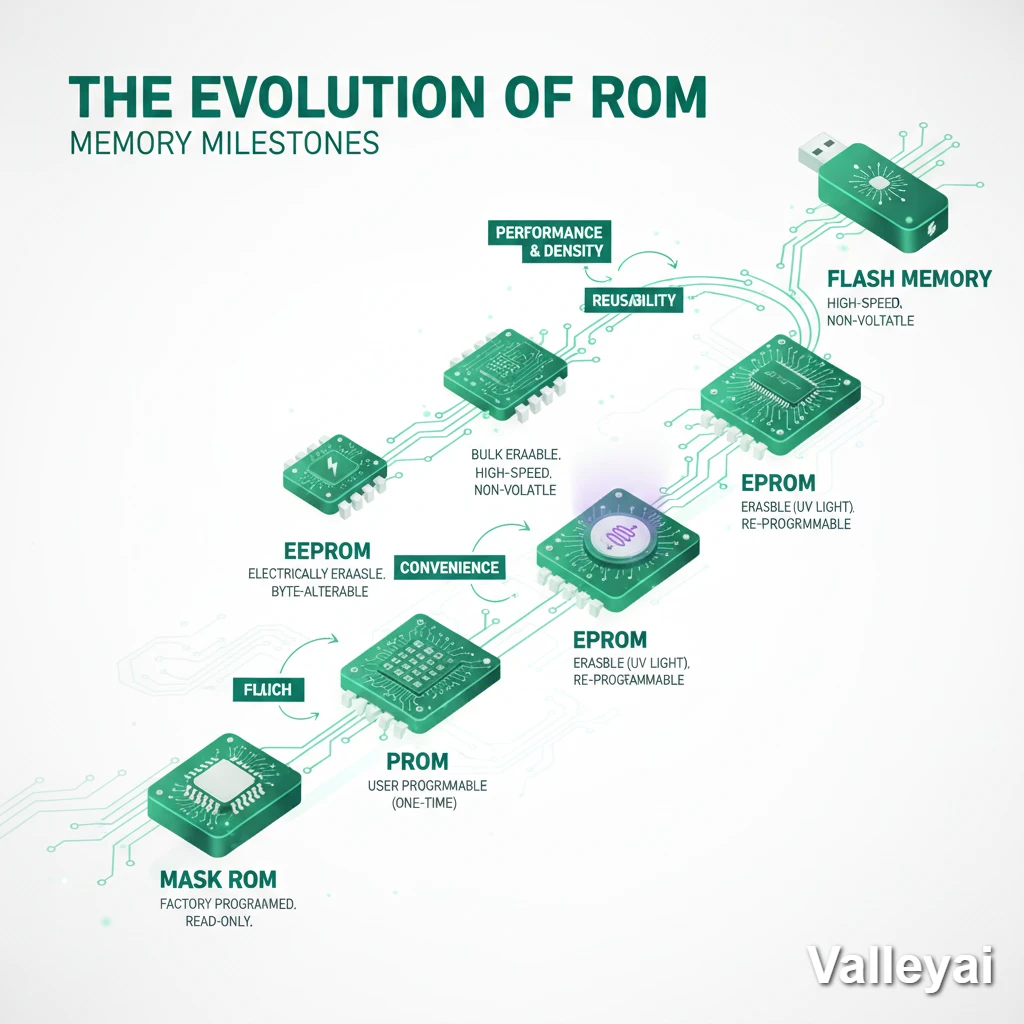

The evolution happened in stages, driven by real engineering constraints:

PROM (Programmable ROM): Original ROM was truly read-only, with its data fixed during manufacture. PROM allowed one-time programming after manufacture: you could write to it once, using high-voltage pulses that permanently set each bit. After that, it was read-only.

EPROM (Erasable Programmable ROM): The next step allowed erasure, but only by exposing the chip to ultraviolet light through a small quartz window on the package. The UV light discharged the floating-gate transistors that stored data. You’d remove the chip, put it under a UV lamp for 20–30 minutes, then reprogram it. Functional, but inconvenient.

The UV erasure process was genuinely tedious. After programming, you’d cover the window with an opaque label, because stray UV exposure could corrupt the data: direct sunlight could erase a chip in about a week, and ordinary fluorescent room lighting could do it over a few years. EEPROM wasn’t just a convenience upgrade. It was an engineering mercy.

EEPROM (Electrically Erasable Programmable ROM): Removed the UV requirement. EEPROM can be erased and rewritten electrically, byte by byte, without removing the chip. That is what made in-place BIOS updates possible: the update process writes new data to the chip while it’s still installed. Early rewritable BIOS chips used EEPROM; modern motherboards store their firmware in Flash, EEPROM’s higher-density descendant.

Flash Memory: A variant of EEPROM optimized for block-level erasure and higher density. Flash is what’s in your SSD, USB drive, smartphone’s internal storage, and SD card. It’s non-volatile, rewritable, and technically a descendant of ROM.
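The “block-level erasure” constraint is easier to see in a toy model. This Python sketch assumes simplified Flash behavior: erasing resets every byte in a block to 0xFF (all ones), and programming can only flip bits from 1 to 0. Real Flash adds pages, wear leveling, and error correction on top, and the class and method names here are hypothetical.

```python
class ToyFlashBlock:
    """Hypothetical model of a single Flash erase block."""

    def __init__(self, size: int = 16) -> None:
        self.cells = bytearray([0xFF] * size)  # erased state: every bit is 1

    def program(self, offset: int, value: int) -> None:
        # Programming can only clear bits (1 -> 0), so the stored byte
        # becomes the bitwise AND of its old contents and the new value.
        self.cells[offset] &= value

    def erase(self) -> None:
        # The only way to set bits back to 1: reset the entire block.
        for i in range(len(self.cells)):
            self.cells[i] = 0xFF


block = ToyFlashBlock()
block.program(0, 0x0F)
print(hex(block.cells[0]))  # 0xf
block.program(0, 0xF0)      # overwrite attempt without erasing first
print(hex(block.cells[0]))  # 0x0: neither value survived
block.erase()
block.program(0, 0xF0)
print(hex(block.cells[0]))  # 0xf0
```

Because rewriting a single byte in place would mean erasing its whole block, Flash controllers generally write updated data to a fresh, already-erased location and reclaim old blocks later. Byte-erasable EEPROM doesn’t have this constraint, which is why it is more convenient but less dense.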

So when someone says “ROM can’t be written to,” they’re describing the factory-fixed ROM and one-time PROM of the 1970s. Modern ROM is a family of non-volatile, rewritable memory technologies. The “read-only” label survived because the core property, non-volatility, stayed constant even as the write restriction fell away.

The name stuck. The technology didn’t.

RAM vs ROM: The Definitive Comparison

| Property | RAM | ROM |
|---|---|---|
| Volatility | Volatile (data lost without power) | Non-volatile (data persists without power) |
| Primary function | Active processing workspace | Permanent instruction storage |
| Write behavior | Read and write freely | Originally read-only; modern variants (EEPROM, Flash) rewritable |
| Access speed | Nanoseconds (DRAM: 10–100 ns; SRAM cache: roughly 1–10 ns) | Slower: reads from ~100 ns (NOR Flash) to tens of µs (NAND Flash); writes and erases far slower |
| Capacity in modern devices | 8GB–128GB (consumer); TBs (servers) | Kilobytes to megabytes (firmware chips); GBs (Flash/SSD) |
| Data at power-off | Erased | Retained |
| CPU interaction | Direct, constant interaction | Read at boot; rarely accessed during normal operation |
| Cost per GB | Higher than Flash; lower than SRAM | Flash is cheaper than DRAM at scale |
| Typical location | DIMM slots (desktops); soldered (phones, many laptops) | Motherboard chip (BIOS/UEFI); embedded in SoC (mobile) |
| Modern examples | DDR5 DIMM modules, LPDDR5 in phones | UEFI firmware chip, NAND Flash in SSDs |

How RAM and ROM Work Together at Boot

The relationship between RAM and ROM isn’t competitive; it’s sequential.

When you press the power button:

- ROM activates first. The CPU starts executing firmware instructions directly from the BIOS/UEFI chip (ROM). Main memory (DRAM) isn’t usable yet; on many x86 systems, early firmware borrows the CPU’s cache as temporary scratch space.

- ROM initializes RAM. The BIOS/UEFI performs a POST (Power-On Self-Test), which includes detecting, testing, and initializing the RAM modules. At this point, RAM goes from uninitialized to usable.

- The bootloader loads into RAM. BIOS/UEFI hands off to the bootloader (stored on the SSD/HDD), which loads the OS kernel into RAM.

- The OS takes over from RAM. From this point forward, the CPU primarily works with data in RAM. ROM is largely idle until the next boot or a firmware update.

Without ROM, the first step never happens. Without RAM, the bootloader and OS have nowhere to load. They’re not interchangeable: they’re complementary stages in a startup sequence.

This is also why corrupted BIOS firmware (ROM) is more catastrophic than a RAM failure. A RAM failure might cause crashes or blue screens. A corrupted BIOS means the system can’t boot at all: it never even gets to the point of testing RAM.
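The ordering, and why a firmware fault is worse than a memory fault, can be captured in a toy simulation. Everything here is hypothetical and heavily simplified: real firmware runs many more stages, and this models only the case where a RAM fault is bad enough to fail POST (a marginal stick may boot fine and crash later).

```python
def boot(firmware_ok: bool, ram_ok: bool) -> list[str]:
    """Return the boot stages reached before the sequence stops (toy model)."""
    stages: list[str] = []

    # Stage 1: the CPU starts executing firmware from ROM. No firmware, no start.
    if not firmware_ok:
        return stages
    stages.append("firmware runs from ROM")

    # Stage 2: firmware runs POST, which tests and initializes RAM.
    if not ram_ok:
        stages.append("POST reports memory error")
        return stages
    stages.append("RAM initialized")

    # Stage 3: the bootloader and OS kernel are loaded into RAM.
    stages.append("bootloader loads kernel into RAM")

    # Stage 4: the OS runs from RAM; ROM sits idle until the next boot.
    stages.append("OS running")
    return stages


print(boot(firmware_ok=True, ram_ok=True))
print(boot(firmware_ok=True, ram_ok=False))
print(boot(firmware_ok=False, ram_ok=True))
```

With bad RAM, the firmware at least runs far enough to report the problem. With corrupted firmware, the list comes back empty: nothing runs, so nothing can even be diagnosed.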

When RAM Is Actually the Bottleneck (And When It Isn’t)

Most guides stop at definitions. This is the part that actually matters if you’re trying to make a decision about your system.

RAM becomes the limiting factor when your system is running more simultaneous processes than available RAM can hold. The OS compensates by paging: moving inactive memory pages to the SSD and reading them back when needed. Fetching a page from an SSD takes microseconds instead of nanoseconds, so paging is hundreds to thousands of times slower than RAM access (and far worse on a hard drive). When paging is active, everything feels sluggish.
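A standard way to quantify that slowdown is effective access time: if a fraction p of memory accesses trigger a page fault, the average cost is (1 - p) × RAM latency + p × page-fault service time. The latencies below are illustrative assumptions (100 ns for DRAM, 100 µs to fetch a page from an SSD), not measurements of any particular system.

```python
RAM_NS = 100             # assumed DRAM access latency, in nanoseconds
PAGE_FAULT_NS = 100_000  # assumed time to fetch a page from an SSD (100 µs)


def effective_access_ns(fault_rate: float) -> float:
    """Average memory access time when `fault_rate` of accesses page-fault."""
    return (1 - fault_rate) * RAM_NS + fault_rate * PAGE_FAULT_NS


for p in (0.0, 0.0001, 0.001, 0.01):
    eat = effective_access_ns(p)
    print(f"fault rate {p:.2%}: {eat:8.1f} ns ({eat / RAM_NS:.1f}x baseline)")
```

Under these assumptions, one page fault per thousand accesses roughly doubles the average access time, and one per hundred makes it about eleven times slower. That nonlinearity is why a system can feel fine one moment and sluggish the next once paging starts.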

RAM is likely your bottleneck if:

- You’re running many applications simultaneously (50+ browser tabs, video editing while running a VM)

- Task Manager (Windows) or Activity Monitor (macOS) shows RAM usage consistently above 85–90%

- Disk activity stays high (or, on a hard drive, you can hear it churning) during tasks that shouldn’t require much storage access

- System responsiveness drops when switching between applications

RAM is NOT your bottleneck if:

- RAM usage is below 70% and the system is still slow → likely CPU-bound (video encoding, 3D rendering) or GPU-bound

- Storage reads/writes are maxed out → storage is the bottleneck (large file transfers, database operations)

- A single application is slow but RAM usage is low → software optimization issue, not hardware
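The checklist above can be written down as a rough triage function. The RAM thresholds (85% and 70%) come from the lists; the function name, its inputs, and the 90% cutoffs for CPU and disk are hypothetical choices for illustration. Real diagnosis should look at trends over time, not a single snapshot.

```python
def likely_bottleneck(ram_pct: float, paging_active: bool,
                      cpu_pct: float, disk_busy_pct: float) -> str:
    """Rule-of-thumb triage based on the checklist (toy heuristic)."""
    # Checked first: paging itself makes the disk busy, so RAM pressure
    # would otherwise be misreported as a storage problem.
    if ram_pct >= 85 and paging_active:
        return "RAM: memory is nearly full and the OS is paging to disk"
    if disk_busy_pct >= 90:  # hypothetical cutoff
        return "storage: reads/writes are saturated"
    if ram_pct < 70 and cpu_pct >= 90:  # hypothetical cutoff
        return "CPU: plenty of RAM free, processor maxed out"
    if ram_pct < 70:
        return "GPU or software: RAM and CPU look fine, check the application"
    return "inconclusive: gather more measurements"


print(likely_bottleneck(ram_pct=92, paging_active=True, cpu_pct=40, disk_busy_pct=95))
print(likely_bottleneck(ram_pct=45, paging_active=False, cpu_pct=99, disk_busy_pct=5))
```

On a live system, a library such as psutil can supply some of the inputs (for example, `psutil.virtual_memory().percent` and `psutil.cpu_percent(interval=1)`); the ordering of the checks is the part worth keeping, since it rules out RAM pressure before blaming storage.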

In practice, if you’re debugging a system that crashes randomly, the first instinct is to blame the hard drive or software, but silent RAM failures often manifest as memory corruption that looks exactly like a software bug: random crashes, corrupted files, programs that exit without error messages. The hardware diagnostic tool to reach for first is a memory tester such as MemTest86, not a disk check.

The number of times I’ve watched someone reinstall Windows to fix random crashes that were actually caused by a single failing RAM stick is more than I’d like to admit. MemTest86 runs unattended for half an hour or more while you do something else. A Windows reinstall, plus reinstalling apps and restoring files, eats hours of your attention. Run the memory test first. Always.

Adding more RAM to a CPU-bound system changes nothing. Identifying the actual bottleneck before spending money is the diagnostic step most people skip.

RAM and ROM Across Different Devices

The RAM/ROM balance looks dramatically different depending on the device category.

Desktop/Laptop:

Consumer systems typically have 8–64GB of DDR4 or DDR5 DRAM as main RAM, a few to tens of megabytes of SRAM inside the CPU (L1/L2/L3 cache), and a firmware chip holding the BIOS/UEFI, usually tens of megabytes of Flash. The SSD (NAND Flash) is technically a ROM descendant too, but it functions as secondary storage, not traditional ROM.

Smartphone:

A modern flagship might have 12GB of LPDDR5 RAM and 256GB of UFS NAND Flash storage. The “ROM” in smartphone specs, a term still used in some markets, refers to internal Flash storage, not traditional ROM. The actual firmware ROM (bootloader, baseband processor code) is separate and much smaller. When a phone “kills background apps,” that’s RAM management: the OS is freeing RAM by closing processes you’re not actively using.

IoT / Embedded Systems:

An Arduino Uno has 2KB of SRAM (RAM) and 32KB of Flash (program storage, functioning as ROM). The entire program lives in Flash. The 2KB of SRAM holds only runtime variables. This ratio, much more ROM than RAM, is typical for devices where the program is fixed and data processing is minimal.

Servers:

Enterprise servers may have terabytes of DDR5 ECC RAM (error-correcting memory that fixes single-bit errors and detects double-bit errors), while their ROM holds the UEFI firmware plus the firmware for the BMC (baseboard management controller) that provides out-of-band management via IPMI. The scale is different; the principle is identical.
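To see what “fixes single-bit errors” means mechanically, here is a minimal Hamming(7,4) code in Python. It is a simplified stand-in, not what the memory controller literally runs: classic ECC DIMMs protect each 64-bit word with 8 extra check bits using a SECDED code, which also adds an overall parity bit so double-bit errors are detected rather than miscorrected. The syndrome idea is the same.

```python
def hamming74_encode(d: list[int]) -> list[int]:
    """Encode 4 data bits into a 7-bit codeword (bit positions 1..7)."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(c: list[int]) -> tuple[list[int], int]:
    """Correct up to one flipped bit; return (data bits, error position or 0)."""
    c = list(c)  # work on a copy
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s3  # 1-based position of the bad bit, 0 if none
    if syndrome:
        c[syndrome - 1] ^= 1  # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]], syndrome


data = [1, 0, 1, 1]
word = hamming74_encode(data)
word[4] ^= 1  # simulate a single bit flip in memory
decoded, pos = hamming74_decode(word)
print(decoded, pos)  # [1, 0, 1, 1] 5
```

The three syndrome bits spell out, in binary, exactly which position flipped, so the decoder can repair it without ever having seen the original data.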

The Blurring Line: Where RAM and ROM Are Converging

The clean separation between RAM and ROM is becoming less absolute.

NVMe SSDs (non-volatile storage using NAND Flash) are fast enough that operating systems lean on them more heavily as swap space, and APIs like Microsoft’s DirectStorage stream data from NVMe drives straight toward the GPU with less CPU overhead. Apple Silicon chips (M-series) use a unified memory pool shared between CPU and GPU: still volatile, still RAM in behavior, but architecturally different from traditional DIMM slots.

Intel Optane (now discontinued) was a persistent memory technology that combined speeds much closer to DRAM than to Flash with non-volatile storage, blurring the line almost completely. It could function either as fast storage or as a memory tier whose contents survived reboots.

MRAM (Magnetoresistive RAM) and FRAM (Ferroelectric RAM) are non-volatile memory technologies that operate at RAM-like speeds. Both already ship in niche products (FRAM, for example, in some low-power microcontrollers), but neither has displaced DRAM or Flash in mainstream computers. Both challenge the traditional volatile/non-volatile binary.

The fundamental concepts (volatile workspace vs. non-volatile persistence) remain valid. The specific technologies implementing those concepts are evolving faster than the terminology that describes them.

Frequently Asked Questions

Which is faster, RAM or ROM?

RAM is significantly faster for read and write operations. DRAM (main system RAM) accesses data in roughly 10–100 nanoseconds, and SRAM (CPU cache) responds in around a nanosecond at the L1 level. ROM-family memory is slower, especially for writes: NOR Flash reads take on the order of 100 nanoseconds, NAND Flash random reads take tens of microseconds, and writes and erases on both take far longer. For sequential reads, modern NVMe Flash reaches several GB/s, which is still well below DRAM bandwidth.

Is more RAM or ROM better?

They serve different needs. More RAM improves multitasking performance and prevents paging slowdowns. More ROM (storage) means more space for files, applications, and data. If your system is slow under heavy load with high RAM usage, more RAM helps. If you’re running out of space for files, more storage helps. They don’t substitute for each other.

Why do computers need both RAM and ROM?

ROM provides the starting instructions the system needs before anything else can function including RAM initialization. RAM provides the fast, flexible workspace the processor needs during operation. One handles the bootstrap problem; the other handles everything after. A system with only RAM has no way to start. A system with only ROM has no workspace to compute in.

Can ROM be written to?

Modern ROM variants (EEPROM, Flash) can be written to electrically. This is how BIOS firmware updates work: the update process writes new data to the firmware chip (today, usually Flash). Traditional PROM (from the 1970s) was truly write-once. EPROM required UV light to erase. The “read-only” name reflects the original design intent, not the current technical reality.

What happens to RAM when you restart?

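Treat it as lost. RAM contents don’t survive a shutdown, and after a restart the firmware and a freshly loaded OS start over, so anything not saved to storage is gone. This is why unsaved work disappears if a computer loses power unexpectedly. Hibernation exists specifically to work around RAM’s volatility: before powering down, the OS writes the contents of RAM to a file on storage (hiberfil.sys on Windows) and reads it back on the next start.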

The Decision That Matters

Understanding RAM and ROM isn’t just a CS exam answer. It’s the framework for diagnosing why a system behaves the way it does: why a phone slows down under load, why a corrupted BIOS is more serious than a crashed application, why adding storage doesn’t fix sluggish multitasking.

The distinction is becoming technically blurrier as memory technologies converge. But the underlying logic (volatile workspace vs. non-volatile persistence) maps directly onto how every computing device, from a $5 microcontroller to a $50,000 server, manages the difference between what it’s doing right now and what it needs to remember forever.

That’s a distinction worth understanding precisely.

Kaleem

My name is Kaleem, and I am a computer science graduate with 5+ years of experience in computer science, AI, tech, and web innovation. I founded ValleyAI.net to simplify AI, internet, and computer topics, and I also focus on building useful utility tools. My clear, hands-on content is trusted by 5K+ monthly readers worldwide.