Humanity has entered a new phase of technological advancement, one in which we are starting to ask whether a person is needed for a given task at all. Beyond the mechanization of jobs, recent leaps in AI development are producing models that can mimic human performance on more than repetitive, rule-based functions. Much of this success rests on data annotation and on the professionals at data annotation companies who carry it out.

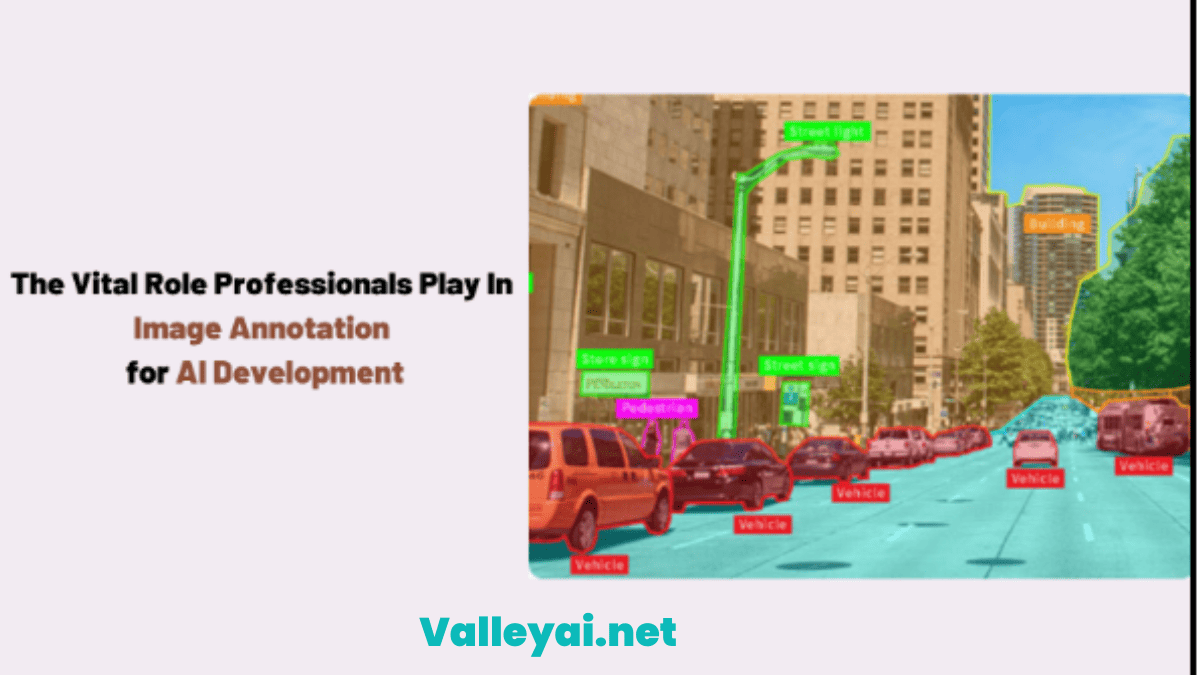

Annotation involves tagging specific elements in data that is then used to train AI and machine learning algorithms. The main data types used for this process are images (and, by extension, video), audio, and text. Image annotation gets the most attention because image-based AI covers a wide array of industrial and consumer applications.
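To make "tagging specific elements" concrete, here is a minimal sketch of what a single image annotation record might look like in Python. The schema (the hypothetical `BoundingBox` and `ImageAnnotation` classes, pixel-coordinate boxes, and the example file name) is an illustrative assumption, not a standard format or any particular tool's API; real projects usually rely on established formats such as COCO or Pascal VOC.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class BoundingBox:
    """Hypothetical axis-aligned box in pixel coordinates, with one label."""
    x_min: int
    y_min: int
    x_max: int
    y_max: int
    label: str

    def area(self) -> int:
        # Width times height; clamped at zero for degenerate boxes.
        return max(0, self.x_max - self.x_min) * max(0, self.y_max - self.y_min)


@dataclass
class ImageAnnotation:
    """Hypothetical record tying one image to the elements tagged in it."""
    image_path: str
    boxes: list[BoundingBox] = field(default_factory=list)

    def add(self, box: BoundingBox) -> None:
        # Reject boxes with zero or negative width/height before storing them.
        if box.x_max <= box.x_min or box.y_max <= box.y_min:
            raise ValueError("box must have positive width and height")
        self.boxes.append(box)

    def labels(self) -> list[str]:
        # The tags an annotator attached to this image, in insertion order.
        return [b.label for b in self.boxes]


# Example: an annotator tags a person and a car in a street photo.
annotation = ImageAnnotation("street_scene.jpg")
annotation.add(BoundingBox(10, 20, 110, 220, "person"))
annotation.add(BoundingBox(150, 40, 300, 120, "car"))
print(annotation.labels())
```

Thousands of records like this, each checked by a person or produced by an algorithm, make up the training set that the rest of this article is concerned with.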

However, image annotation is also data-intensive: building a functionally accurate AI model requires a large number of data samples. This has driven the push to automate annotation with techniques such as deep learning.

This blog explores why humans still provide better image annotation than automation, along with a few drawbacks of relying on them.

Reasons To Opt For Human-Driven Image Annotation Services Over Automation

1. Intuitiveness

A picture may be worth a thousand words, but the elements in it also express something beyond words. Humans pick up on the emotions an image conveys in a way machines, which cannot be programmed to feel, do not. So when it comes to selecting target subjects or objects for tagging, an algorithm fails to account for this emotional factor.

An experienced professional has the intuition to pick up subtle emotional cues in an image. This lets them go beyond the stated requirements and add labels that lead to better training outcomes. Such tagging helps create AI models that can approximate the emotional component of real-world data, giving their output greater context.

Consider an AI used to determine whether a person in a video feed is potentially hostile. If human intuition about body language is captured in the model's annotated visual training data, the model may be better able to read body language and make that decision more accurately.

2. Ability To Deal With Abstraction

Professionals providing image labeling services don't always have the luxury of annotating data they are familiar with. They also face challenges that go beyond what they were trained for, such as a project that requires annotating abstract images.

An image annotation algorithm will be out of its depth in these circumstances, but humans will not. People can perceive an image from multiple perspectives and tag its elements accordingly. This lets them label the abstract elements in such images, or even the abstract concept the image is trying to portray.

Handling abstraction this way adds not only more annotated image data but also new predefined annotation tags to the tag library. Any annotation algorithm employed later will start with a larger tag library, which can improve its performance as well.

An example of this ability in action is annotating images for a generative AI model that produces images from prompts. A user may ask it for an image in the abstract style of their favorite painter. To generate the desired output, the AI must understand that style, what the user means by “abstract art,” and which elements distinguish the preferred artist from their peers.

3. Creativity and Imagination

Building on the previous point about abstraction, image annotation professionals can also bring creativity and imagination to their work. This is one of humans' biggest strengths, and one that machines simply can't match: when asked to come up with something unique, all a machine can do is pseudo-randomly assemble a collage of what it learned from its training data.

For image annotation, this means algorithms can't describe something they see in an image sample unless they are already familiar with it. Humans, on the other hand, can go beyond what is in front of them, accurately identifying and labeling unfamiliar elements in a photo.

This makes contextual understanding and accurate labeling of image data possible. For example, when a photographer tries to convey their imagination through a photo, a person is better placed than a machine to interpret it accurately.

4. Ability To Collaborate

Teamwork makes the dream work, even in image annotation. However skilled annotation professionals are, there will be times when their knowledge and skills fall short. At such moments, they can reach out to and work with other professionals who are better qualified to tackle the problem that has stalled the work.

For example, an expert image annotator might collaborate with a radiologist to annotate X-ray images for a diagnostic AI project. The annotator establishes a mutually beneficial working relationship with the radiologist for the duration of the project and works with them to complete it.

This stands in stark contrast to what would happen if an ML algorithm faced a similar roadblock. In all likelihood, it would fail at the first element beyond the scope of its training. It couldn't collaborate with another algorithm to resolve the impasse, so progress would grind to a halt.

5. Adaptability

Say a professional at a data annotation company has been tasked with annotating images needed to develop a diagnostic AI. For some reason, management then moves that person to a different project, one that requires annotating images for vehicle navigation.

With their experience, domain knowledge, and skill, the professional can adapt to the new demands placed on them and deliver what is expected. If they are unfamiliar with the particulars of the domain they have been moved to, they can easily collaborate with someone who has that domain expertise.

Machine-driven annotation simply lacks this on-the-fly adaptability. An algorithm is confined to the training data set used to develop it and cannot change its techniques to suit a new demand unless it is updated to do so.

Such updates are expensive and time-consuming, more so than retraining humans for a new domain, since you may have to develop a new algorithm for each niche.

Shortfalls of Image Annotation Services By Humans

There is no such thing as a free lunch. Image annotation performed by humans, however good, comes with its share of drawbacks. Some are manageable, while others are inherent to people and can't be eliminated. You should be aware of these drawbacks before employing humans for image labeling services:

1. Can Only Work On A Limited Dataset

Annotation is a data-intensive process, sometimes requiring thousands to millions of images to reach an acceptable level of accuracy in the final AI model. People simply can't work through such volumes in an acceptable time frame; to finish on schedule, you or your annotation agency would need a large team of professionals. Algorithms, by contrast, can work tirelessly on any volume of image data.

2. Can’t Multitask

Multitasking may be a workplace buzzword, but humans are wired to focus on one task at a time. When an image annotator is overwhelmed with multiple demands at once, they struggle to cope, which leads to errors, stress, or both.

In contrast, ML algorithms can work on multiple annotation tasks simultaneously, provided they are built to do so. Letting algorithms handle annotation can therefore shorten project turnaround times while maintaining accuracy.

3. May Deviate From Requirements

This is both a good and a bad thing about having humans annotate images. Expert annotators may feel the need to add input that isn't in the project brief. When that input isn't beneficial, it can have adverse effects for everyone involved.

4. Are Resource-Intensive

An annotation algorithm doesn’t demand payment or other types of resources the way a human does. Your costs will include the subscription amount you have to pay an external agency, or if you’re doing it in-house, the cost of IT infrastructure. The amenities offered to people for providing image annotation services can add up over time, especially if the ROI doesn’t compensate for it.

5. Slower Turn-Around Times

Humans can’t work continuously for long periods and require frequent breaks. Algorithms on the other hand can go on non-stop throughout the day and night. This speeds up turnaround times tremendously.

6. Prone To Making Mistakes

No matter how good a professional annotator is, there is always a chance they will make errors. There are several reasons for this, with fatigue, state of mind, and heavy workload being the most prominent. An algorithm doesn't struggle with these issues, so it avoids this kind of error, although it can still make mistakes on data unlike its training set or when it suffers an unforeseen glitch.

Conclusion

Technology pushes new boundaries every day, and business adoption of AI is one of the most visible examples. Your company can't afford to miss out on the advantages AI offers for many tasks, especially those involving visual data processing. By opting for image labeling services performed by experts, you can keep pace with these advancements and push the boundaries of what your company can accomplish.

Kaleem

My name is Kaleem, and I am a computer science graduate with 5+ years of experience in computer science, AI, tech, and web innovation. I founded ValleyAI.net to simplify AI, internet, and computer topics, and I also focus on building useful utility tools. My clear, hands-on content is trusted by 5K+ monthly readers worldwide.